Reinforcement Learning: Concepts Driving Intelligent Decision-Making

Introduction

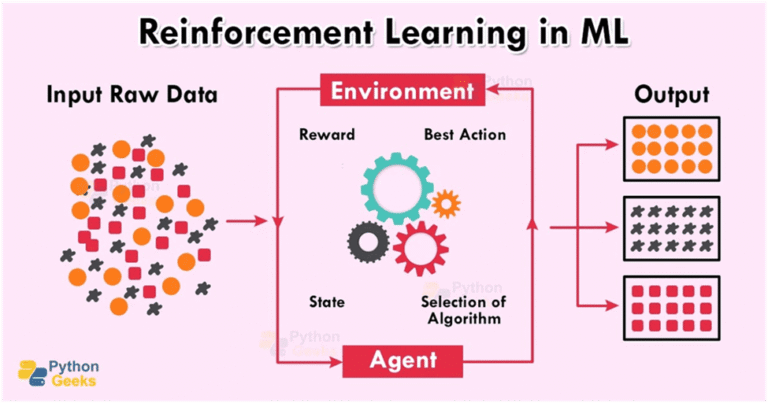

Reinforcement Learning is a fundamental area of machine learning in which intelligent agents learn to optimise their actions through interaction with their environment. This General Overview shows how decision-making systems improve when agents are rewarded or penalised for their actions. A key advantage of Reinforcement Learning is that it does not require labelled training data. Supervised learning learns from correct examples. Here, the agent learns from the consequences of its own actions. Understanding these fundamentals is essential when studying adaptive systems that operate in dynamic and unpredictable environments.

Major Elements of Reinforcement Learning

At the core of reinforcement learning are a few key elements that determine how learning happens. The Reinforcement Learning Agent is the decision maker. The Environment is the system the agent interacts with and affects. At each step, the agent perceives the current situation (the state), takes an action, and then receives a reward along with the next state.
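
The interaction cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions. `CorridorEnv` is a hypothetical toy environment: a line of cells where reaching the right end yields a reward of 1. The `reset`/`step` method names are our own choice and are not any particular library's API.

```python
import random


class CorridorEnv:
    """Hypothetical toy environment: cells 0..length-1 on a line.

    The agent starts in cell 0. Reaching the last cell ends the episode
    with reward +1; every other step gives reward 0.
    """

    def __init__(self, length=5):
        self.length = length
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # action is -1 (move left) or +1 (move right); the walls clamp movement
        self.state = min(max(self.state + action, 0), self.length - 1)
        done = self.state == self.length - 1
        reward = 1.0 if done else 0.0
        return self.state, reward, done


def run_episode(env, choose_action, max_steps=100):
    """One episode of the loop: observe state, act, receive reward and next state."""
    state = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = choose_action(state)            # the agent decides
        state, reward, done = env.step(action)   # the environment responds
        total_reward += reward                   # the agent receives feedback
        if done:
            break
    return total_reward


random.seed(0)
random_agent = lambda state: random.choice([-1, 1])
print(run_episode(CorridorEnv(), random_agent))
```

To change the agent, you only need to pass a different `choose_action` function. The environment and the loop stay the same.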

The agent's objective is to maximize cumulative reward over time, not just the immediate reward of a single step. This long-term objective distinguishes reinforcement learning from short-term optimization techniques. Two concepts guide the agent's behaviour. A policy maps states to actions. A value function estimates the expected cumulative reward obtainable from a state, or from taking a particular action in a state.
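
The sketch below makes "cumulative reward" and "value function" concrete under a few assumptions. It uses the common discounted formulation, where the return from a time step is G = r₁ + γ·r₂ + γ²·r₃ + …, with a discount factor γ between 0 and 1. The choice γ = 0.9 is arbitrary. The sketch also evaluates a fixed policy, represented as a plain dictionary from states to actions. The environment is a hypothetical corridor: cells 0 to 4, a reward of 1 for reaching cell 4, and 0 otherwise. The function names are illustrative.

```python
def discounted_return(rewards, gamma=0.9):
    """Return r1 + gamma*r2 + gamma^2*r3 + ... for one episode's rewards."""
    g = 0.0
    for r in reversed(rewards):   # accumulate from the last reward backwards
        g = r + gamma * g
    return g


def evaluate_policy(policy, n_states=5, gamma=0.9, sweeps=100):
    """Iterative policy evaluation on a hypothetical deterministic corridor.

    policy maps each non-terminal state to an action (-1 = left, +1 = right).
    Returns a list where entry s estimates the discounted return from state s.
    """
    goal = n_states - 1
    values = [0.0] * n_states            # the terminal goal state keeps value 0
    for _ in range(sweeps):
        for s in range(goal):            # update non-terminal states in place
            next_s = min(max(s + policy[s], 0), goal)
            reward = 1.0 if next_s == goal else 0.0
            values[s] = reward + gamma * values[next_s]
    return values


print(discounted_return([0, 0, 1]))      # the reward arrives on the third step
always_right = {s: +1 for s in range(4)}
print(evaluate_policy(always_right))
```

Take the "always move right" policy. Its estimated values rise toward the goal: approximately 0.73, 0.81, 0.9 and 1.0 for cells 0 to 3. This shows how discounting makes distant rewards worth less than immediate ones.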

Learning Process and Algorithms

Learning in reinforcement learning is iterative: the agent improves through experience. At each step it can explore, trying actions whose outcomes are still uncertain. Alternatively, it can exploit, choosing actions already known to yield high rewards. Balancing exploration and exploitation is one of the central challenges of reinforcement learning.
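
One simple and widely used way to balance exploration and exploitation is the ε-greedy rule. With a small probability ε the agent picks a random action; otherwise it picks the action with the highest estimated value. The sketch below applies this rule to a multi-armed bandit, a one-state problem where each "arm" pays a noisy reward. Several details are illustrative assumptions: the arm means, the Gaussian noise, ε = 0.1, and the function name `epsilon_greedy_bandit`.

```python
import random


def epsilon_greedy_bandit(true_means, epsilon=0.1, steps=1000, seed=0):
    """Sample-average epsilon-greedy agent on a hypothetical Gaussian bandit.

    true_means: expected reward of each arm (unknown to the agent).
    Returns the agent's estimated value of each arm and how often each was pulled.
    """
    rng = random.Random(seed)
    n_arms = len(true_means)
    estimates = [0.0] * n_arms
    counts = [0] * n_arms
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)                              # explore
        else:
            arm = max(range(n_arms), key=lambda a: estimates[a])    # exploit
        reward = rng.gauss(true_means[arm], 1.0)    # noisy reward, std dev 1
        counts[arm] += 1
        # incremental sample-average update of the arm's estimated value
        estimates[arm] += (reward - estimates[arm]) / counts[arm]
    return estimates, counts


estimates, counts = epsilon_greedy_bandit([0.2, 0.5, 1.0])
print(counts)
```

With `epsilon=0` the agent is purely greedy. It can then lock onto whichever arm happened to look best early on. Larger values explore more, but they waste pulls on poor arms.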

Common families of algorithms include:

- value-based methods such as Q-learning, which learn the value of each action and act on those estimates;
- policy-based methods, which optimise the action-selection policy directly;
- actor-critic methods, which combine the two by pairing a policy (the actor) with a value estimate (the critic).

These algorithms allow the system to learn and adapt as environmental conditions change.
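
To illustrate the value-based family, here is a minimal tabular Q-learning sketch. After each step it moves the estimate Q(s, a) toward the target r + γ·max Q(s′, a′), scaled by a learning rate α. Several choices are assumptions made to keep the example small and self-contained: the corridor environment, the hyperparameters (α = 0.1, γ = 0.9, ε = 0.1), and the episode counts. Policy-based and actor-critic methods would need a different structure.

```python
import random


def q_learning(n_states=5, episodes=200, alpha=0.1, gamma=0.9,
               epsilon=0.1, max_steps=100, seed=0):
    """Tabular Q-learning on a hypothetical corridor with the goal at the right end."""
    rng = random.Random(seed)
    actions = [-1, +1]                      # move left / move right
    goal = n_states - 1
    q = {(s, a): 0.0 for s in range(n_states) for a in actions}

    for _ in range(episodes):
        state = 0
        for _ in range(max_steps):
            # epsilon-greedy action selection, breaking ties at random
            if rng.random() < epsilon:
                action = rng.choice(actions)
            else:
                best = max(q[(state, a)] for a in actions)
                action = rng.choice([a for a in actions if q[(state, a)] == best])

            # environment step: walls clamp movement, reward 1 at the goal
            next_state = min(max(state + action, 0), goal)
            done = next_state == goal
            reward = 1.0 if done else 0.0

            # Q-learning update toward r + gamma * max_a' Q(s', a');
            # the terminal state contributes no future value
            future = 0.0 if done else max(q[(next_state, a)] for a in actions)
            target = reward + gamma * future
            q[(state, action)] += alpha * (target - q[(state, action)])

            state = next_state
            if done:
                break
    return q


q = q_learning()
greedy = {s: max([-1, +1], key=lambda a: q[(s, a)]) for s in range(4)}
print(greedy)
```

The table is keyed by (state, action) pairs, so this approach only suits small, discrete problems. Larger problems typically replace the table with a function approximator such as a neural network.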

Applications Across Industries

Applications of reinforcement learning abound. In robotics, it helps robots learn complex motor skills and navigation. In the financial sector, models based on reinforcement learning help optimise investment portfolios and support algorithmic trading by learning from market dynamics.

In healthcare, reinforcement learning is used to develop treatment strategies tailored to individual patients. In technology, its ability to learn from experience is used to build recommendation engines, self-driving cars, and game-playing programs that perform better than human experts.

Advantages and Limitations

One of the most important strengths of reinforcement learning is its ability to handle sequential decisions without pre-programmed rules. It is well suited to problems in which each action affects later states and rewards.

However, reinforcement learning also has some difficulties. Training can be computationally expensive, often requiring many interactions with the environment. If reward functions are designed poorly, agents can learn undesired behaviours. Moreover, providing guarantees about risk and making learned behaviour interpretable remain challenging.

Conclusion

To sum up, reinforcement learning (RL) is central to building self-learning, self-adaptive systems. It enables machines to improve their behaviour based on the outcomes of previous actions. The goal of this General Overview is to deepen your understanding of the basic elements of RL and the methods used to build and deploy intelligent systems. It also aims to show how these systems make decisions based on data.


Reinforcement Learning FAQ

- What is Reinforcement Learning?

Reinforcement Learning is a machine learning method where agents learn optimal actions through trial and error in dynamic settings.

- How does an agent interact with the environment?

The agent observes the environment's state, takes actions, and receives rewards or penalties to guide future decisions.

- Why is Reinforcement Learning useful for adaptive systems?

It excels in unpredictable environments without needing labeled data, relying on experience instead.

- What role does the environment play?

The environment provides states and feedback (rewards) to the agent based on its actions.

- What is a policy in this context?

A policy maps states to actions, helping the agent decide what to do next.

- How do value functions work?

Value functions estimate the expected long-term reward from a state or from taking an action in a state.

- What defines success for an agent?

Maximizing cumulative rewards over time, not just immediate gains.

- What is the exploration-exploitation trade-off?

Agents balance trying new actions (exploration) with using known high-reward ones (exploitation).

- Name a popular Reinforcement Learning algorithm.

Q-learning, a value-based method that updates action-value estimates iteratively.

- What are actor-critic methods?

They combine policy optimization (actor) with value estimation (critic) for efficient learning.

- How is it used in robotics?

Agents learn motor skills and navigation by interacting with physical environments.

- What about finance?

It optimizes portfolios and trading by adapting to market dynamics.

- Can it improve healthcare?

Yes, by personalizing treatment plans through patient outcome feedback.

- What are some examples in technology?

Self-driving cars, recommendation systems, and game-playing AI that outperforms human experts.

- What are its main strengths?

It handles sequential decisions in changing environments without pre-programmed rules.

- What are common limitations?

High computational cost, undesired behaviour caused by poorly designed rewards, and interpretability issues.

- Why is no labeled data needed?

Learning is driven by reward signals from the environment rather than by labeled examples.

- How does the environment influence training?

Dynamic environments require constant adaptation via rewards.

- How does it differ from supervised learning?

There are no pre-labeled examples; the agent learns from delayed, cumulative rewards.

- What is the future of Reinforcement Learning?

It is expected to power self-adaptive AI across industries as algorithms improve.

Penned by Ujjwal

Edited by Komal Rohilla, Research Analyst

For any feedback mail us at [email protected]
