Model Evaluation Metrics: Measures for Machine Learning Performance

Topics: model evaluation, performance metrics

Introduction

Model evaluation is an essential step in the machine learning process: it determines how well a trained system performs on unseen data. Done properly, it leads to systems whose predictions hold up in real-world use. Model evaluation is closely tied to performance metrics, because different problems call for different perspectives on what counts as good performance, and the metric must be chosen to match.

Why Model Evaluation in Machine Learning is Important

Model evaluation is key to confirming that a machine learning model generalises well to data outside the training set. A model that performs well on the training data but poorly on unseen data is said to be overfitting, while underfitting occurs when the model fails to capture the underlying patterns and so performs poorly even on the training data.
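The distinction between overfitting and underfitting can be sketched as a comparison of training and held-out scores. The function below is a rough heuristic only: the name diagnose_fit and both thresholds (gap_tolerance, min_score) are hypothetical values chosen for illustration, and sensible cut-offs depend on the problem.

```python
def diagnose_fit(train_score, test_score, gap_tolerance=0.05, min_score=0.7):
    """Rough heuristic for reading a pair of scores (higher is better).

    gap_tolerance and min_score are illustrative assumptions, not standard values.
    """
    if train_score < min_score:
        # The model does poorly even on data it has already seen.
        return "underfitting"
    if train_score - test_score > gap_tolerance:
        # Good on training data, clearly worse on unseen data.
        return "overfitting"
    return "reasonable fit"


print(diagnose_fit(0.99, 0.70))  # overfitting
print(diagnose_fit(0.55, 0.54))  # underfitting
print(diagnose_fit(0.90, 0.88))  # reasonable fit
```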

Through evaluation, practitioners can recognise these problems early and adjust the model design, the choice of factors, or the learning approach. Evaluation also enables an unbiased comparison when several candidate models are available.

Classification Evaluation Metrics

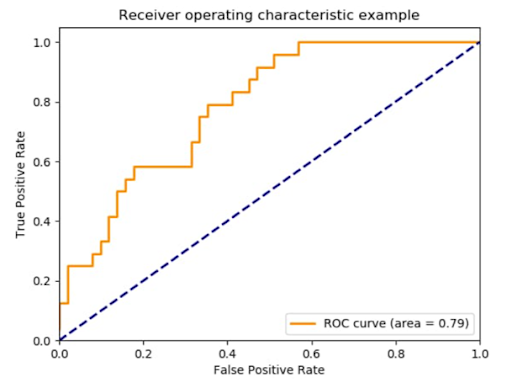

For classification problems, evaluation measures how well the model assigns instances to the correct classes. Common metrics include accuracy, precision, recall, and the F1-score. Accuracy is the proportion of all classifications that are correct. It is a poor metric for imbalanced classes, because a model that always predicts the majority class can still score highly.
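A small sketch of why accuracy can mislead on imbalanced classes. The labels are made up for illustration (5 positives out of 100), and the "model" simply predicts the negative class every time.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


# Illustrative data: 5 positive cases, 95 negative cases.
y_true = [1] * 5 + [0] * 95
always_negative = [0] * 100  # a "model" that never predicts the positive class

print(accuracy(y_true, always_negative))  # 0.95

# Despite 95% accuracy, not a single positive case is found.
positives_found = sum(t == 1 and p == 1 for t, p in zip(y_true, always_negative))
print(positives_found)  # 0
```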

Precision measures what proportion of predicted positives are actual positives, while recall measures what proportion of actual positives are correctly identified. The F1-score (the balanced form of the F-score) is the harmonic mean of precision and recall, so it accounts for both false positives and false negatives in a single number. Additionally, confusion matrices, which tabulate predicted classes against actual classes, help analyse where the errors occur.
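These metrics can all be derived from the four cells of a binary confusion matrix. The following is a minimal sketch in plain Python; the function names and the sample labels are illustrative, and returning 0.0 when a denominator is zero is one common convention rather than the only one.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary problem."""
    tp = fp = fn = tn = 0
    for t, p in zip(y_true, y_pred):
        if p == positive and t == positive:
            tp += 1  # predicted positive, actually positive
        elif p == positive:
            fp += 1  # predicted positive, actually negative
        elif t == positive:
            fn += 1  # predicted negative, actually positive
        else:
            tn += 1  # predicted negative, actually negative
    return tp, fp, fn, tn


def precision_recall_f1(y_true, y_pred, positive=1):
    tp, fp, fn, _ = confusion_counts(y_true, y_pred, positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Illustrative labels: 4 actual positives, 6 actual negatives.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]

print(confusion_counts(y_true, y_pred))  # (2, 1, 2, 5)
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(round(precision, 3), round(recall, 3), round(f1, 3))  # 0.667 0.5 0.571
```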

Regression Evaluation Metrics

Regression algorithms predict continuous outcomes and require different methods of assessment. Mean Absolute Error (MAE), the average absolute difference between the predictions and the observations, is readily interpretable because it is expressed in the units of the target. Mean Squared Error (MSE) and Root Mean Squared Error (RMSE) penalise larger errors more heavily and are helpful when large errors are especially undesirable.
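The difference between MAE and the squared-error metrics shows up most clearly when two sets of predictions have the same total absolute error but distribute it differently. The sketch below uses made-up numbers: one prediction set is off by 1 everywhere, the other is exact except for a single error of 4.

```python
import math


def mae(y_true, y_pred):
    """Mean Absolute Error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mse(y_true, y_pred):
    """Mean Squared Error."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root Mean Squared Error, in the same units as the target."""
    return math.sqrt(mse(y_true, y_pred))


y_true = [10.0, 12.0, 14.0, 16.0]
small_errors = [11.0, 13.0, 15.0, 17.0]     # every prediction off by 1
one_large_error = [10.0, 12.0, 14.0, 20.0]  # one prediction off by 4

print(mae(y_true, small_errors), rmse(y_true, small_errors))        # 1.0 1.0
print(mae(y_true, one_large_error), rmse(y_true, one_large_error))  # 1.0 2.0
```

Both prediction sets have the same MAE, but RMSE doubles for the set containing the single large error.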

The R-squared value gives the proportion of the variance in the target variable that the model accounts for. Though a popular measure, R-squared must be interpreted with care, particularly when comparing models with differing numbers of predictors: adding a predictor to a least-squares linear model never lowers R-squared on the training data, even when the predictor carries no real information.
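A minimal sketch of R-squared follows. It also includes adjusted R-squared, which is not discussed in the text above; it is added here only as one common way to account for the number of predictors when comparing models. The sample values are illustrative.

```python
def r_squared(y_true, y_pred):
    """1 - (residual sum of squares / total sum of squares).

    Assumes y_true is not constant (otherwise the denominator is zero).
    """
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


def adjusted_r_squared(r2, n_samples, n_predictors):
    """Penalise R-squared for the number of predictors used.

    Requires n_samples > n_predictors + 1.
    """
    return 1 - (1 - r2) * (n_samples - 1) / (n_samples - n_predictors - 1)


y_true = [1.0, 2.0, 3.0, 4.0, 5.0]
y_pred = [1.1, 1.9, 3.2, 3.8, 5.0]

r2 = r_squared(y_true, y_pred)
print(round(r2, 4))
# The same R-squared looks less impressive if more predictors were needed.
print(round(adjusted_r_squared(r2, n_samples=5, n_predictors=1), 4))
print(round(adjusted_r_squared(r2, n_samples=5, n_predictors=3), 4))
```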

Validation Techniques

Sound metrics are not enough on their own; they must be paired with proper validation methods. Train/test splitting, cross-validation, and stratified sampling help ensure that evaluation results are not biased and reflect real-world performance. Cross-validation is generally more reliable than a single train/test split, because every observation is used for testing exactly once and the result does not hinge on one particular partition.
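To make the splitting schemes concrete, here is a sketch of k-fold and stratified fold construction in plain Python. Both function names are hypothetical, and the k-fold version does not shuffle; in practice the data are usually shuffled first, and established libraries provide tested implementations.

```python
from collections import defaultdict


def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs for k contiguous folds."""
    # Spread any remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


def stratified_fold_assignment(labels, k):
    """Assign sample indices to k folds, round-robin within each class,
    so every fold keeps roughly the overall class proportions."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)
    return folds


for train_idx, test_idx in k_fold_indices(10, 3):
    print(test_idx)  # each index appears in exactly one test fold

labels = [0] * 8 + [1] * 4  # illustrative 2:1 class ratio
for fold in stratified_fold_assignment(labels, 4):
    print(fold, [labels[i] for i in fold])  # each fold: two 0s and one 1
```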

These methods help gauge model stability and guard against drawing incorrect conclusions from a single random partition of the data. A robust evaluation framework is necessary for machine learning models to work well in real-world environments.
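One simple way to read stability from cross-validation is to look at the spread of the per-fold scores as well as their mean. The scores below are invented for illustration and do not come from any real model.

```python
import statistics


def summarise_folds(scores):
    """Return (mean, sample standard deviation) of per-fold scores."""
    return statistics.mean(scores), statistics.stdev(scores)


# Hypothetical per-fold accuracies for two models.
stable_model = [0.81, 0.80, 0.82, 0.79, 0.81]
unstable_model = [0.95, 0.60, 0.88, 0.55, 0.90]

for name, scores in [("stable", stable_model), ("unstable", unstable_model)]:
    mean, spread = summarise_folds(scores)
    print(f"{name}: mean={mean:.3f}, std={spread:.3f}")
```

A large spread suggests that the reported score depends heavily on which observations land in the test fold.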

Difficulties Associated with Choosing the Correct Metrics

The choice of evaluation metric depends on the business objectives, the characteristics of the data, and the tolerable level of risk. In one problem it may be more important to reduce false negatives than false positives (for example, in disease screening, where a missed case is costly); in another the priority is reversed (for example, in spam filtering, where flagging a legitimate message is costly).
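One way to make this trade-off explicit is to attach a cost to each error type and pick the decision threshold that minimises the total. The sketch below assumes a model that outputs a score between 0 and 1; the function names, the scores, and the cost values are all hypothetical.

```python
def total_cost(y_true, scores, threshold, cost_fn, cost_fp):
    """Sum the cost of false negatives and false positives at a threshold."""
    cost = 0
    for t, s in zip(y_true, scores):
        pred = 1 if s >= threshold else 0
        if t == 1 and pred == 0:
            cost += cost_fn  # missed positive
        elif t == 0 and pred == 1:
            cost += cost_fp  # false alarm
    return cost


def best_threshold(y_true, scores, thresholds, cost_fn, cost_fp):
    """Return the candidate threshold with the lowest total cost."""
    return min(thresholds, key=lambda th: total_cost(y_true, scores, th, cost_fn, cost_fp))


# Illustrative labels and model scores.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.6, 0.3, 0.7, 0.4, 0.2, 0.1, 0.05]
candidates = [0.25, 0.5, 0.75]

# False negatives ten times as costly: a low threshold is preferred.
print(best_threshold(y_true, scores, candidates, cost_fn=10, cost_fp=1))  # 0.25
# False positives ten times as costly: a high threshold is preferred.
print(best_threshold(y_true, scores, candidates, cost_fn=1, cost_fp=10))  # 0.75
```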

Conclusion

In conclusion, model evaluation is a backbone component in developing a trustworthy and efficient machine learning system. By choosing appropriate performance metrics, practitioners can ensure that a system is not only accurate but also aligned with real-world objectives and constraints.

FAQs

1. What is model evaluation in machine learning?

Model evaluation is the process of measuring how well a machine learning model performs on unseen data using predefined criteria.

2. Why are performance metrics important in machine learning?

Performance metrics help quantify prediction quality and allow practitioners to compare models objectively.

3. How does model evaluation help prevent overfitting?

By testing models on unseen data, evaluation reveals whether a model generalises well or only memorises the training data.

4. Which metrics are commonly used for classification problems?

Accuracy, precision, recall, F1-score, and confusion matrices are widely used to assess classification models.

5. Why is accuracy not always a reliable metric?

Accuracy can be misleading when datasets are imbalanced, as it may hide poor performance on minority classes.

6. What metrics are used to evaluate regression models?

Regression models are typically assessed using MAE, MSE, RMSE, and R-squared values.

7. How does cross-validation improve evaluation reliability?

Cross-validation tests models across multiple data splits, so the result depends less on any single split and the performance estimate is more stable.

8. Can one performance metric fit all machine learning problems?

No, the choice of metric depends on business goals, data characteristics, and acceptable risk levels.

9. What role does business context play in choosing metrics?

Business priorities determine whether minimising false positives or minimising false negatives is more important.

10. Why is model evaluation essential for real-world deployment?

Proper evaluation ensures models are accurate, reliable, and aligned with real-world constraints before deployment.

Penned by Ujjwal

Edited by Komal Rohilla, Research Analyst
